RAC Administration

I am only going to talk about RAC administration, if you need Oracle administration then see my Oracle section.

It is recommended that the spfile (binary parameter file) is shared between all nodes within the cluster, but it is possible that each instance can have its own spfile. The parameters can be grouped into three categories

| Unique parameters | These parameters are unique to each instance, examples would be instance_name, thread and undo_tablespace |

| Identical parameters | Parameters in this category must be the same for each instance, examples would be db_name and control_file |

| Neither unique or identical parameters | parameters that are not in any of the above, examples would be db_cache_size, large_pool_size, local_listener and gcs_servers_processes |

The main unique parameters that you should know about are

The identical unique parameters that you should know about are below you can use the below query to view all of them

select name, isinstance_modifiable from v$parameter where isinstance_modifiable = 'false' order by name;

| syntax for parameter file | <instance_name>.<parameter_name>=<parameter_value> inst1.db_cache_size = 1000000 *.undo_management=auto |

| example | alter system set db_2k_cache_size=10m scope=spfile sid='inst1'; |

Starting and Stopping Instances

The srvctl command is used to start/stop an instance, you can also use sqlplus to start and stop the instance

| start all instances | srvctl start database -d <database> -o <option> |

| stop all instances | srvctl stop database -d <database> -o <option> Note: the listeners are not stopped, you can use the -o option to specify startup/shutdown options, see below for options immediate abort normal transactional |

| start/stop particular instance | srvctl [start|stop] database -d <database> -i <instance>,<instance> |

To recap on undo management you can see my undo section, instances in a RAC do not share undo, they each have a dedicated undo tablespace. Using the undo_tablespace parameter each instance can point to its own undo tablespace

| undo tablespace | instance1.undo_tablespace=undo_tbs1 instance2.undo_tablespace=undo_tbs2 |

With todays Oracle you should be using automatic undo management, again I have a detailed discussion on AUM in my undo section.

I have already discussed temporary tablespace's, in a RAC environment you should setup a temporary tablespace group, this group is then used by all instances of the RAC. Each instance creates a temporary segment in the temporary tablespace it is using. If an instance is running a large sort, temporary segments can be reclaimed from segments from other instances in that tablespace.

| useful views | gv$sort_segment - explore current and maximum sort segment usage statistics (check columns freed_extents, free_requests ,if they grow increase tablespace size) gv$tempseg_usage - explore temporary segment usage details such as name, SQL, etc v$tempfile - identify - temporary datafiles being used for the temporary tablespace |

I have already discussed redologs, in a RAC environment every instance has its own set of redologs. Each instance has exclusive write access to its own redologs, but each instance can read each others redologs, this is used for recovery. Redologs are located on the shared storage so that all instances can have access to each others redologs. The process is a little different to the standard Oracle when changing the archive mode

| archive mode (RAC) | SQL> alter system set cluster_database=false scope=spfile sid='prod1'; srvctl stop database -d <database> SQL> startup mount SQL> alter database archivelog; SQL> alter system set cluster_database=true scope=spfile sid='prod1'; SQL> shutdown; srvctl start database -d prod |

Again I have already talked about flashback, there is no difference in RAC environment apart from the setting up

| flashback (RAC) | ## Make sure that the database is running in archive log mode SQL> archive log list ## Setup the flashback SQL> alter system set cluster_database=false scope=spfile sid='prod1'; SQL> alter system set DB_RECOVERY_FILE_DEST_SIZE=200M scope=spfile; SQL> alter system set DB_RECOVERY_FILE_DEST='/ocfs2/flashback' scope=spfile; srvctl stop database -p prod1 SQL> startup mount SQL> alter database flashback on; SQL> shutdown; srvctl start database -p prod1 |

We have already come across the srvctl above, this command is called the server control utility. It can divided into two categories

Oracle stores database configuration in a repository, the configuration is stored in the Oracle Cluster Registry (OCR) that was created when RAC was installed, it will be located on the shared storage. Srvctl uses CRS to communicate and perform startup and shutdown commands on other nodes.

I suggest that you lookup the command but I will provide a few examples

| display the registered databases | srvctl config database |

| status | srvctl status database -d <database |

| stopping/starting | srvctl stop database -d <database> |

| adding/removing | srvctl add database -d <database> -o <oracle_home> srvctl add instance -d <database> -i <instance> -n <node> srvctl add service -d <database> -s <service> -r <preferred_list> srvctl add nodeapps -n <node> -o <oracle_home> -A <name|ip>/network srvctl add asm -n <node> -i <asm_instance> -o <oracle_home> srvctl remove database -d <database> -o <oracle_home> srvctl remove instance -d <database> -i <instance> -n <node> srvctl remove service -d <database> -s <service> -r <preferred_list> srvctl remove nodeapps -n <node> -o <oracle_home> -A <name|ip>/network srvctl asm remove -n <node> |

Services are used to manage the workload in Oracle RAC, the important features of services are

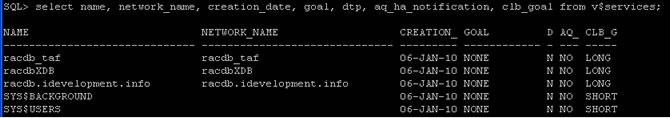

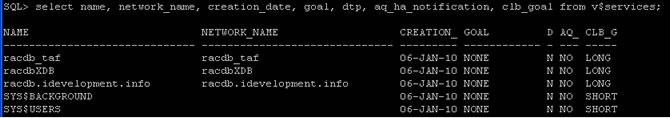

The view v$services contains information about services that have been started on that instance, here is a list from a fresh RAC installation

The table above is described below

You can administer services using the following tools

Two services are created when the database is first installed, these services are running all the time and cannot be disabled.

| add | srvctl add service -d D01 -s BATCH_SERVICE -r node1,node2 -a node3 Note: the options are describe below -d - database -s - the service -r - the service will running on the these nodes -a - if nodes in the -r list are not running then run on this node |

| remove | srvctl remove service -d D01 -s BATCH_SERVICE |

| start | srvctl start service -d D01 -s BATCH_SERVICE |

| stop | srvctl stop service -d D01 -s BATCH_SERVICE |

| status | srvctl status service -d D10 -s BATCH_SERVICE |

| service (example) | ## create the JOB class ## create a job associated with a job class |

CRS is Oracle's clusterware software, you can use it with other third-party clusterware software, though it is not required (apart from HP True64).

CRS is start automatically when the server starts, you should only stop this service in the following situations

CRS Administration |

|

| starting | ## Starting CRS using Oracle 10g R1 ## Starting CRS using Oracle 10g R2 |

| stopping | ## Stopping CRS using Oracle 10g R1 srvctl stop -d database <database> srvctl stop asm -n <node> srvctl stop nodeapps -n <node> /etc/init.d/init.crs stop ## Stopping CRS using Oracle 10g R2 $ORA_CRS_HOME/bin/crsctl stop crs |

| disabling/enabling | ## stop CRS restarting after a reboot, basically permanent over reboots ## Oracle 10g R1 /etc/init.d/init.crs [disable|enable] ## Oracle 10g R2 $ORA_CRS_HOME/bin/crsctl [disable|enable] crs |

| checking | $ORA_CRS_HOME/bin/crsctl check crs $ORA_CRS_HOME/bin/crsctl check evmd $ORA_CRS_HOME/bin/crsctl check cssd $ORA_CRS_HOME/bin/crsctl check crsd $ORA_CRS_HOME/bin/crsctl check install -wait 600 |

Resource Applications (CRS Utilities) |

|

| status | $ORA_CRS_HOME/bin/crs_stat $ORA_CRS_HOME/bin/crs_stat -t $ORA_CRS_HOME/bin/crs_stat -ls $ORA_CRS_HOME/bin/crs_stat -p Note: -t more readable display -ls permission listing -p parameters |

| create profile | $ORA_CRS_HOME/bin/crs_profile |

| register/unregister application | $ORA_CRS_HOME/bin/crs_register $ORA_CRS_HOME/bin/crs_unregister |

| Start/Stop an application | $ORA_CRS_HOME/bin/crs_start $ORA_CRS_HOME/bin/crs_stop |

| Resource permissions | $ORA_CRS_HOME/bin/crs_getparam $ORA_CRS_HOME/bin/crs_setparam |

| Relocate a resource | $ORA_CRS_HOME/bin/crs_relocate |

Nodes |

|

| member number/name | olsnodes -n Note: the olsnodes command is located in $ORA_CRS_HOME/bin |

| local node name | olsnodes -l |

| activates logging | olsnodes -g |

Oracle Interfaces |

|

| display | oifcfg getif |

| delete | oicfg delig -global |

| set | oicfg setif -global <interface name>/<subnet>:public oicfg setif -global <interface name>/<subnet>:cluster_interconnect |

Global Services Daemon Control |

|

| starting | gsdctl start |

| stopping | gsdctl stop |

| status | gsdctl status |

Cluster Configuration (clscfg is used during installation) |

|

| create a new configuration | clscfg -install Note: the clscfg command is located in $ORA_CRS_HOME/bin |

| upgrade or downgrade and existing configuration | clscfg -upgrade clscfg -downgrade |

| add or delete a node from the configuration | clscfg -add clscfg -delete |

| create a special single-node configuration for ASM | clscfg -local |

brief listing of terminology used in the other nodes |

clscfg -concepts |

| used for tracing | clscfg -trace |

| help | clscfg -h |

Cluster Name Check |

|

| print cluster name | cemutlo -n Note: in Oracle 9i the ulity was called "cemutls", the command is located in $ORA_CRS_HOME/bin |

| print the clusterware version | cemutlo -w Note: in Oracle 9i the ulity was called "cemutls" |

Node Scripts |

|

| Add Node | addnode.sh Note: see adding and deleting nodes |

| Delete Node | deletenode.sh Note: see adding and deleting nodes |

As you already know the OCR is the registry that contains information

The file location is specified during the installation, the file pointer indicating the OCR device location is the ocr.loc, this can be in either of the following

The file contents look something like below, this was taken from my installation

| orc.loc | ocrconfig_loc=/u02/oradata/racdb/OCRFile ocrmirrorconfig_loc=/u02/oradata/racdb/OCRFile_mirror local_only=FALSE |

OCR is import to the RAC environment and any problems must be immediately actioned, the command can be found in located in $ORA_CRS_HOME/bin

OCR Utilities |

|

| log file | $ORA_HOME/log/<hostname>/client/ocrconfig_<pid>.log |

| checking | ocrcheck Note: will return the OCR version, total space allocated, space used, free space, location of each device and the result of the integrity check |

| dump contents | ocrdump Note: by default it dumps the contents into a file named OCRDUMPFILE in the current directory |

| export/import | ocrconfig -export <file> ocrconfig -restore <file> |

| backup/restore | # show backups ocrconfig -showbackup # to change the location of the backup, you can even specify a ASM disk ocrconfig -backuploc <path|+asm> # perform a backup, will use the location specified by the -backuploc location ocrconfig -manualbackup # perform a restore ocrconfig -restore <file> # delete a backup orcconfig -delete <file> Note: there are many more option so see the ocrconfig man page |

| add/remove/replace | ## add/relocate the ocrmirror file to the specified location ocrconfig -replace ocrmirror '/ocfs2/ocr2.dbf' ## relocate an existing OCR file ocrconfig -replace ocr '/ocfs1/ocr_new.dbf' ## remove the OCR or OCRMirror file ocrconfig -replace ocr ocrconfig -replace ocrmirror |

The voting disk as I mentioned in the architecture is used to resolve membership issues in the event of a partitioned cluster, the voting disk protects data integrity.

| querying | crsctl query css votedisk |

| adding | crsctl add css votedisk <file> |

| deleting | crsctl delete css votedisk <file> |

| Previous | Menu | Next |